A sitemap is a resource you create to tell search engine crawlers about the pages, videos and files that make up your website, so that Google and other search engines can discover them.

As a matter of course, search engine web crawlers, also known as bots, robots or spiders, crawl a website’s pages to retrieve content. A search engine’s database does not reflect all information on the internet. Instead, it reflects only the information that the search engine has crawled and indexed. The content that crawlers retrieve from your site is what forms a given search engine’s index, which is what it uses to return search results.

A sitemap provides search engines with a direct list of URLs to your content, improving a crawler’s ability to find your pages, as it no longer relies solely on each page’s relationship to other referring pages within your site and on the wider web. It’s one of the best ways to ensure that Google can see all of the pages on your site, or at least all of the pages that you want it to see.

What is a sitemap?

There are two types of sitemaps: user sitemaps and sitemaps for search engines. A user sitemap is a page on a website that helps users navigate it by listing and linking to important areas and content.

User sitemaps can be a useful way for visitors to quickly and easily find the information they need. Ideally, however, your website shouldn’t have to rely on a sitemap to improve overall user experience. Clear user menus and breadcrumb navigation should allow users to navigate content without having to reference a map of the website to orient themselves. User sitemaps tend to be in html format. You may still see a link to a user sitemap on older websites.

Search engines, on the other hand, still rely strongly on sitemaps. Since they have limited time (or crawl budget) to spend on a site, search engine crawlers like Googlebot can read the sitemap file to crawl your site more intelligently. These sitemaps are in XML format and designed strictly for bots (this means they don’t look particularly pretty). A sitemap can usually be located at:

www.yourdomain.com/sitemap.xml

A search engine sitemap should list all important URLs and exclude any page that carries a robots instruction not to index it (noindex).
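As a rough illustration of that rule, the sketch below checks pages for a robots meta tag containing noindex and keeps only the remaining URLs as sitemap candidates. The page URLs and HTML snippets are hypothetical, and a real site would normally let its CMS or sitemap plugin handle this.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Records whether a page has <meta name="robots" content="...noindex...">."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        # Attribute values can be None, so fall back to an empty string
        name = (attributes.get("name") or "").lower()
        content = (attributes.get("content") or "").lower()
        if name == "robots" and "noindex" in content:
            self.noindex = True


def has_noindex(html):
    """Return True if the HTML carries a noindex robots meta tag."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.noindex


# Hypothetical pages, for illustration only
pages = {
    "https://www.yourdomain.com/": "<html><head><title>Home</title></head></html>",
    "https://www.yourdomain.com/thank-you": (
        '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    ),
}

# Only pages without a noindex instruction belong in the sitemap
sitemap_urls = [url for url, html in pages.items() if not has_noindex(html)]
print(sitemap_urls)
```

This only looks at the meta tag in the HTML. A noindex instruction can also be sent as an HTTP header, which this sketch does not check.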

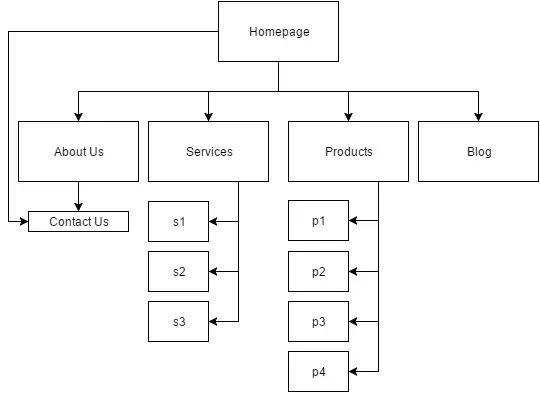

A search engine sitemap is a highly important aspect of any SEO campaign, because it reduces the chances of search engine crawlers overlooking new or recently updated content. Your sitemap connects the dots from page to page to page, and dictates the hierarchy of each of those pages and the importance of the page relative to other URLs within your site. It’s easiest to think of it as a flowchart:

For most sites, your navigation and internal links will be more complicated than this, which is why it’s lucky a sitemap doesn’t have to be generated manually: your content management system (CMS) or a number of third-party tools can auto-generate one for you. Still, it’s important to be aware of your site structure. A well-optimised information architecture, meaning a clear organisation of subdomains and folders built with content silos in mind, allows search crawlers to take the path of least resistance through your site.

Your sitemap can also provide valuable metadata about the pages it lists. Metadata is information about a web page, such as when the page was last updated, how often the page is changed and how it relates to other pages on your site. You can also use a sitemap to provide Google with metadata about specific types of content on your pages, including multimedia content. It’s all about feeding search engine crawlers the information they need to understand your website. The more they understand, the more likely you are to rank highly.
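To make this concrete, here is a minimal sketch of how an XML sitemap with a last-updated date per page could be generated. It uses the `urlset`, `url`, `loc` and `lastmod` elements from the sitemaps.org protocol. The URLs and dates are hypothetical, and in practice your CMS or a third-party tool would normally produce this file for you.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # <lastmod> is optional metadata, written here as YYYY-MM-DD
        ET.SubElement(entry, "lastmod").text = last_modified
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and dates, for illustration only
pages = [
    ("https://www.yourdomain.com/", "2024-01-15"),
    ("https://www.yourdomain.com/blog/what-is-a-sitemap", "2024-02-01"),
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

The printed string is what would be saved as sitemap.xml, encoded as UTF-8.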

Why do you need a sitemap?

If your website pages are properly linked, search engine crawlers can usually discover most of your site without one. But a sitemap can improve the crawling of your site if it is really large, if it contains an archive of content pages that are isolated or not linked well to one another, if it is new and has few external links pointing to it (read more on this in link building), or if it uses multimedia and hard-to-parse content that a search crawler might not otherwise be able to ‘read’.

To get an idea of what Google sees from your site, perform the following Google search:

site:yourwebsite.com

By putting “site:” in front of your domain name, you are asking Google to list the pages it has indexed for your site. If you see fewer results than you expect, it may mean there’s something about your site structure that’s preventing Googlebot from ‘seeing’ parts of your site.

This goes both ways:

A sitemap also matters if there is content on your site that you would prefer not to appear in search: say, duplicate content that might be considered spammy, or a news page that competes in search with your homepage. Search engines will attempt to find and index all the content on your site, whether or not you provide a sitemap, unless you both exclude those pages from your sitemap and place instructions on the pages themselves to noindex them. The contents of your robots.txt file can also tell crawlers which parts of a website they should and should not visit.
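As a quick way to see how robots.txt rules apply to individual URLs, the sketch below uses Python’s standard `urllib.robotparser` module. The robots.txt content and the URLs are hypothetical. Note that robots.txt governs crawling only, so it is not a substitute for a noindex instruction on the page.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/
Sitemap: https://www.yourdomain.com/sitemap.xml
"""

parser = RobotFileParser()
# parse() takes the file as a list of lines, so no network request is made
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url, user_agent="Googlebot"):
    """Return True if the rules above allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


print(is_crawlable("https://www.yourdomain.com/blog/post"))
print(is_crawlable("https://www.yourdomain.com/drafts/unfinished"))

# Sitemap locations declared in robots.txt (requires Python 3.8 or later)
print(parser.site_maps())
```

Listing the sitemap URL in robots.txt, as in the sample above, is another way for crawlers to find it.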

Submitting your sitemap to Google

Sitemaps can be submitted to Google via the Search Console Sitemaps tool. This further encourages Google’s crawlers to reference your sitemap when they visit.

Google supports several sitemap formats, including XML, RSS and Atom feeds, and plain text files, all of which must be UTF-8 encoded. A single sitemap is limited to 50,000 URLs or 50MB uncompressed, so if your site is large you can submit multiple sitemaps and/or sitemap index files that point to them.
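For a large site, the split into multiple sitemaps might look something like the sketch below. It assumes the 50,000-URL per-file limit from the sitemaps.org protocol and uses that protocol’s `sitemapindex` and `sitemap` elements. The URLs and the `sitemap-N.xml` file naming are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # per-file URL limit in the sitemaps.org protocol


def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a list of URLs into groups small enough for one sitemap each."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls):
    """Return sitemap index XML that points at each child sitemap file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for sitemap_url in sitemap_urls:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = sitemap_url
    body = ET.tostring(index, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical site with 120,000 pages, for illustration only
all_urls = [f"https://www.yourdomain.com/page-{n}" for n in range(120_000)]
chunks = chunk_urls(all_urls)

# Hypothetical naming scheme for the child sitemap files
child_sitemaps = [
    f"https://www.yourdomain.com/sitemap-{i + 1}.xml" for i in range(len(chunks))
]
index_xml = build_sitemap_index(child_sitemaps)

print(len(chunks))
print(index_xml)
```

Each chunk would be written out as its own sitemap file, and only the index file needs to be submitted in Search Console.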

Using a sitemap doesn’t guarantee search engines will crawl and index all the items as and how you tell them to, but your site will benefit hugely and you’ll never be penalised for having one.